What is duplicate content and how does it affect your SEO?

Duplicate content is exactly what it sounds like. It is when the exact same text or highly similar content appears on more than one web page. It affects your SEO because search engines get confused about which version to rank. They end up wasting time crawling the same stuff and might split your ranking power across multiple pages instead of giving one page the push it needs.

That is the short answer.

But if you are wondering why your new service pages are not bringing in leads, or why your website traffic has flatlined, then we need to look a bit closer at what is happening under the hood. I have spent the last fifteen years looking at websites for Breakline and I can tell you this issue trips up almost everyone. It happens by accident mostly. A solicitor will copy their About Us blurb onto every single location page. A roofer will have a page for roof repair and another for roofing repairs that say the exact same thing.

Suddenly Google has no idea which one is the important one. It just sees a mess.

So let us break down what is actually going on here.

Why search engines hate repeating themselves

Google wants to give people distinct information. If someone searches for a local dentist they want a list of different options with unique details. They absolutely do not want ten results that all read identically.

Think about it from their perspective. Crawling the internet takes a massive amount of computing power and money. When their bots hit your website they have a limited amount of time to look around. We call this a crawl budget. If they spend that budget reading the same paragraph about your emergency dental services on five different pages, they are wasting resources. More importantly, they might miss the pages on your site that actually matter.

It seems logical when you step back.

Search engines are basically huge filing cabinets. If you put five identical documents in five different folders the filing clerk is going to get incredibly annoyed. They will probably just throw four of them in the bin. That is essentially what happens to your duplicate pages.

I remember looking at a massive ecommerce site a few years ago. They had thousands of products and every single one had the same massive block of delivery information at the bottom.

The search bots were choking on it. They were spending so much time reading about shipping policies that they NEVER bothered to index the actual products. It was a disaster.

How exactly does it hurt your website

The biggest problem is something we call keyword cannibalisation. This is just a fancy way of saying your own pages are eating each other. When you have two identical pages competing for the same search term, Google has to pick a winner. Often it picks the wrong one.

Let us say you run a roofing company. You have a main service page for flat roofs. Then you write a blog post about flat roofs that says almost the exact same thing. Google might decide to show the blog post in the search results instead of your main service page. The user clicks the blog post, reads it, and leaves without ever seeing your contact form. You lost a lead because you competed against yourself.

It gets worse when we talk about links.

Backlinks are links from other websites pointing to yours. They act like votes of confidence. If half of your suppliers link to page A and the other half link to page B you have split your voting power down the middle. Neither page is strong enough to rank well. If all those links pointed to one single page it would probably shoot straight up the rankings.

This is why SEO professionals get so obsessed with keeping things tidy. A messy website with duplicated text everywhere is like a leaky bucket. You are losing ranking power through a dozen tiny holes.

The hidden cost of wasted crawls

I think people forget that Google does not index everything instantly. It has to discover the page, crawl it, and then decide if it is worth keeping. If your site is bloated with duplicate content you are making this process take far longer than it should. Your fresh new content gets stuck in a queue behind hundreds of useless copies.

This is especially painful for large websites. If you have thousands of pages you need every single crawl to count.

I saw a client recently who could not understand why their new blog posts were taking months to show up in search. We looked at their server logs and found Googlebot spending ninety percent of its time crawling thousands of printer-friendly versions of old articles. It was just spinning its wheels. We blocked those pages and suddenly their new content started indexing within days. It is all about efficiency.
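If you want to try that kind of log check yourself, here is a minimal sketch. It assumes your server writes the common "combined" access log format and that the wasteful copies live under a `/print/` path. The sample lines, the path, and the function name are all made up for illustration. Bear in mind that anyone can fake a Googlebot user agent, so treat the numbers as a rough guide.

```python
import re
from collections import Counter

# Hypothetical sample lines in the common "combined" access log format.
SAMPLE_LOG = [
    '66.249.66.1 - - [10/Mar/2024:06:01:12 +0000] "GET /print/old-article-1 HTTP/1.1" 200 5120 "-" "Googlebot/2.1 (+http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Mar/2024:06:01:15 +0000] "GET /print/old-article-2 HTTP/1.1" 200 4980 "-" "Googlebot/2.1 (+http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Mar/2024:06:01:19 +0000] "GET /print/old-article-3 HTTP/1.1" 200 5033 "-" "Googlebot/2.1 (+http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Mar/2024:06:01:24 +0000] "GET /blog/new-post HTTP/1.1" 200 7210 "-" "Googlebot/2.1 (+http://www.google.com/bot.html)"',
    '203.0.113.9 - - [10/Mar/2024:06:02:01 +0000] "GET /blog/new-post HTTP/1.1" 200 7210 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

# Pulls the requested path and the user agent out of one log line.
LINE_RE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[\d.]+" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_crawl_share(lines, is_duplicate):
    """Return the share of Googlebot requests that hit duplicate vs unique URLs."""
    hits = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        # Skip lines we cannot parse and requests from other visitors.
        if not match or "Googlebot" not in match.group("agent"):
            continue
        bucket = "duplicate" if is_duplicate(match.group("path")) else "unique"
        hits[bucket] += 1
    total = sum(hits.values())
    if total == 0:
        return {}
    return {bucket: count / total for bucket, count in hits.items()}


share = googlebot_crawl_share(SAMPLE_LOG, lambda path: path.startswith("/print/"))
print(share)
```

Swap the lambda for whatever pattern marks the copies on your own site.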

Internal versus external copying

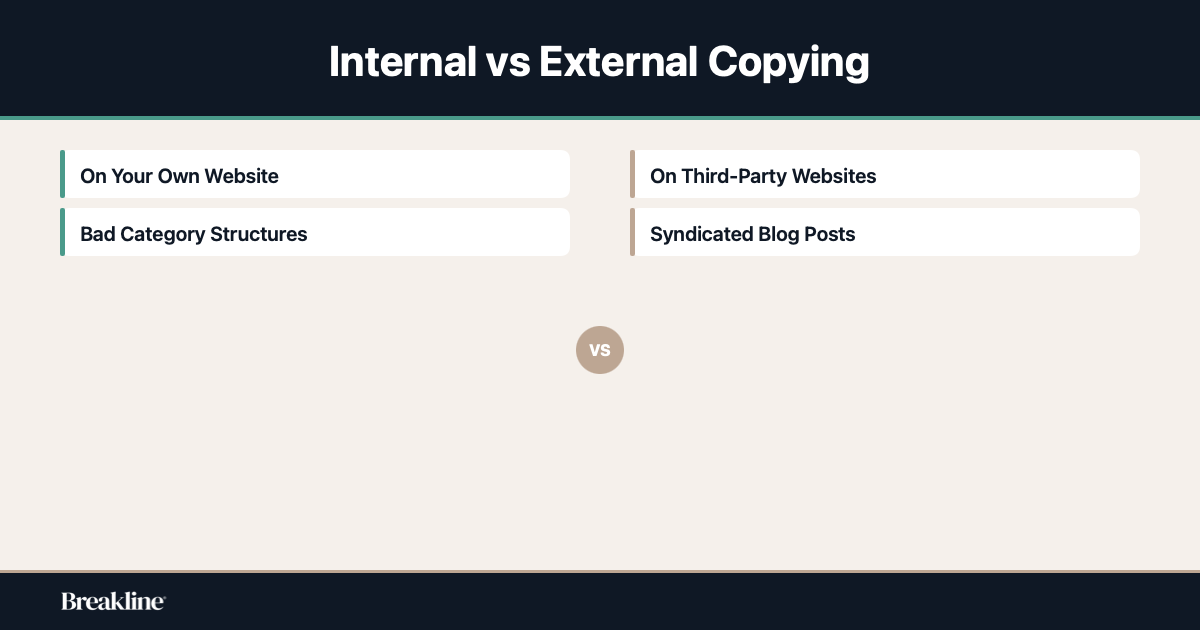

There are two main flavours of this problem. Internal duplicate content happens entirely on your own website while external duplicate content involves other websites.

Internal issues are usually your own fault. Maybe your web developer created a weird category system that duplicates everything. Maybe you have a www and a non-www version of your site running at the same time. This means literally every page on your site exists twice. I see this all the time and it is a quick fix, but it causes absolute havoc if left alone.
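To spot the www problem in a list of URLs (from a crawl export, say), something like the sketch below can help. The URLs and the function name are hypothetical. It also treats http and https as the same page, which is my assumption about what you would want.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def host_variants(urls):
    """Group URLs that differ only by scheme or a leading 'www.'."""
    groups = defaultdict(set)
    for url in urls:
        parts = urlsplit(url)
        host = parts.netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        path = parts.path or "/"
        groups[(host, path)].add(url)
    # Only report pages that are reachable at more than one address.
    return {key: sorted(found) for key, found in groups.items() if len(found) > 1}


crawled = [
    "https://www.example.com/services",
    "https://example.com/services",
    "https://example.com/contact",
]
print(host_variants(crawled))
```

Anything this reports is a page that exists at two addresses and should be redirected to a single preferred one.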

External copying is trickier.

Sometimes you might syndicate your blog posts to another platform to get more eyeballs on them. Or maybe you are an online retailer using the exact same product descriptions the manufacturer gave you. Guess what. A hundred other retailers are using those exact same descriptions too.

Google has no reason to rank your product page over the massive national retailer using the same text. You have to write your own stuff to suit your specific audience. It takes time but it is the only way to stand out.

I think people get really caught up worrying about competitors stealing their content. Yes, it happens, but mostly the call is coming from inside the house.

The myth of the duplicate content penalty

Let me clear something up right now. Google does not have a specific penalty for duplicate content. You will not get a manual action or be banned from the search results just because you repeated yourself.

People panic about this constantly. They read some outdated forum post and think their whole business is going to disappear overnight because they used the same footer on every page.

Google has actually said explicitly that they do not penalise sites for this unless the intent is clearly deceptive. If you are trying to manipulate the results by spinning thousands of garbage pages they will drop the hammer. But if you are just a normal business with some messy website architecture they simply pick one version of the page to index. They ignore the rest.

But ignoring the rest IS the problem.

Just because it is not a formal penalty does not mean it does not hurt. If Google ignores the page you actually wanted customers to see you are losing money. It is a performance issue rather than a punishment. You are just making it really hard for the search engine to do its job properly. I suppose it is like turning up to a job interview in a clown suit.

It is not illegal. You are just probably not getting the job.

Real examples from normal businesses

Let us look at how this actually plays out in practice. I was talking to a solicitor recently who wanted to rank for family law in five different towns. They created five separate pages. Family law in town A, family law in town B, and so on.

The problem was they just copied and pasted the exact same text and swapped out the town name. Google saw straight through it. It indexed the first page and completely ignored the other four. The solicitor was furious but the search engine was just doing its job. There was no distinct information there.
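To get a feel for how similar two town-swapped pages really are, you can compare them yourself. This is a rough sketch using word shingles and Jaccard similarity, a common textbook way to measure text overlap. I am not claiming this is how Google does it, and the sample copy and town names are invented.

```python
def shingles(text, size=3):
    """Return the set of overlapping word sequences of the given length."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_similarity(a, b, size=3):
    """Shared shingles divided by all shingles: 1.0 means identical text."""
    set_a, set_b = shingles(a, size), shingles(b, size)
    if not set_a and not set_b:
        return 1.0
    return len(set_a & set_b) / len(set_a | set_b)


# Invented page copy: identical apart from the town name.
PAGE_A = (
    "our family law team in Ashford helps with divorce custody and "
    "financial settlements call us today for a free consultation"
)
PAGE_B = PAGE_A.replace("Ashford", "Bromley")

print(round(jaccard_similarity(PAGE_A, PAGE_B), 2))
```

On a real page with hundreds of words, swapping one town name barely moves the score away from 1.0, which is exactly the problem.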

You have to provide unique value.

Another classic example is the local plumber who has a services page that lists everything they do. Then they have individual pages for boiler repair and pipe fitting. But the individual pages just repeat the exact same paragraphs from the main services page.

It is totally fine to have a bit of overlap. You do not need to rewrite your company history every time you mention it. But the core meat of the page needs to be fresh. If I am looking for someone to fix my boiler I want to read specifically about boiler repairs.

I want to know what parts you carry and how fast you can get here. If you just give me the same generic corporate blurb I am hitting the back button.

Finding the copies hiding on your site

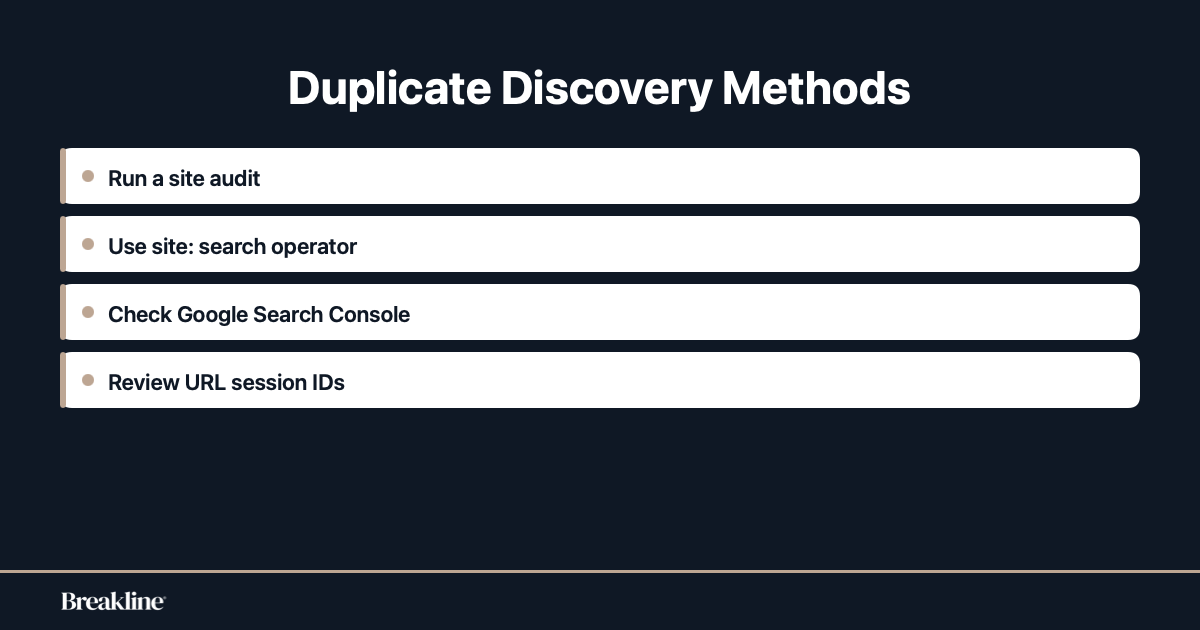

So how do you actually find this stuff? You cannot just click through every single page on your website hoping to spot patterns. Well, you could, but you would probably lose your mind.

The easiest way is to use a tool. If you have a decent marketing budget you can run a site audit using something like Semrush. It will literally spit out a report telling you exactly which pages have duplicate content issues. It flags them up in bright red so you cannot miss them.

But you do not need expensive software to spot the obvious things.

Go to Google and type in site:yourwebsite.com. This shows you a sample of what Google has indexed. Click through the results. If you start seeing weird URLs with a bunch of random numbers and question marks at the end, you probably have a session ID problem. This is when your website software creates a unique URL for every single visitor but the page content is exactly the same.
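If you have a list of those weird URLs, a few lines of code can show how many real pages sit behind them. This is only a sketch. The parameter names in `SESSION_PARAMS` are my guesses at common ones, so check what your own platform actually appends.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed session parameter names; yours may well be different.
SESSION_PARAMS = {"sid", "sessionid", "phpsessid"}


def strip_session_params(url):
    """Return the URL with any session-style query parameters removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in SESSION_PARAMS
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


indexed = [
    "https://example.com/boilers?sid=8f3a91",
    "https://example.com/boilers?sid=c27d04",
    "https://example.com/boilers?sid=11b6ee&page=2",
]
unique_pages = {strip_session_params(url) for url in indexed}
print(len(indexed), "URLs,", len(unique_pages), "real pages")
```

A big gap between the two numbers is a strong sign that session IDs are inflating your URL count.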

You should also check your Google Search Console. It is free. Look at the Pages report to see what is going on.

If you see a massive spike in pages that are crawled but not indexed you have got some cleaning up to do. Sometimes you just need to look at your site structure objectively. Ask yourself if you really need a separate page for every single minor variation of your service. Often it is better to have one really strong detailed page than five weak ones.

Fixing the mess without breaking things

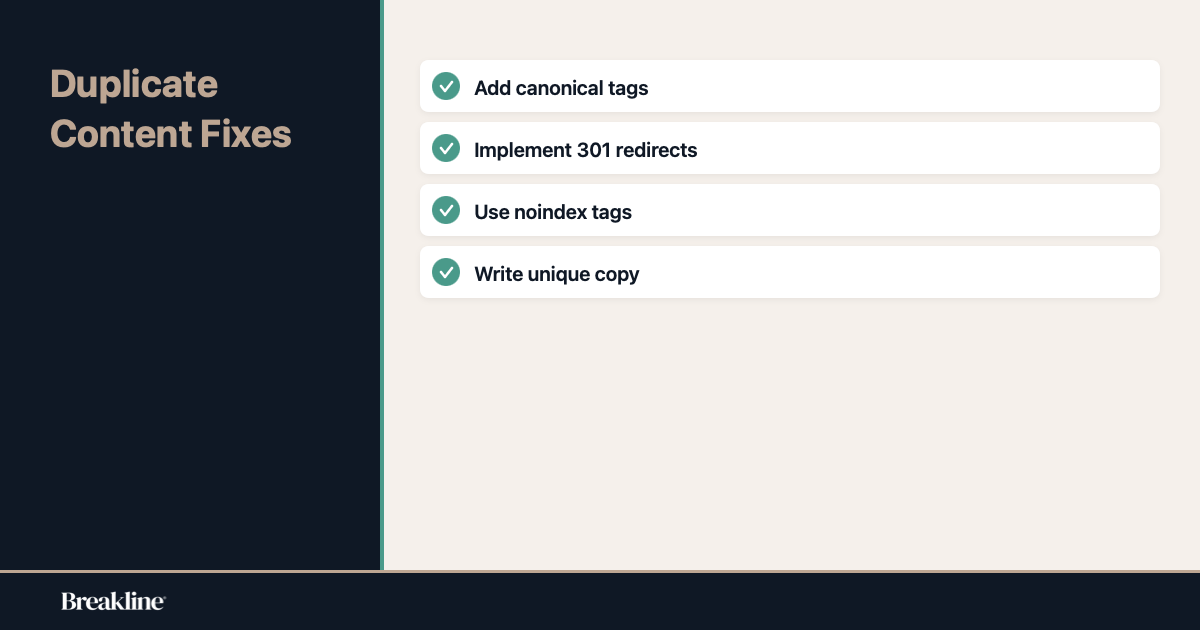

Fixing duplicate content is usually pretty straightforward once you know where it is. The most common tool we use is called a canonical tag. It is a tiny piece of code that sits invisibly on your web page.

Think of it like a sticky note for search engines. If you have three similar pages you put a canonical tag on the two less important ones. The tag basically says "hey Google, I know this looks like a copy, so please treat the main page as the real one." Google treats it as a strong hint rather than a command, but when it is followed the ranking signals get consolidated into your preferred page.
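For the curious, the tag is a `<link rel="canonical">` element in the page head. Here is a small sketch that reads it out of a page using only the Python standard library, which is handy for checking that a page points where you think it does. The sample HTML and URLs are placeholders.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Remembers the href of the first <link rel="canonical"> it sees."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rels = (attr.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rels and self.canonical is None:
            self.canonical = attr.get("href")


def find_canonical(html):
    """Return the canonical URL declared in the HTML, or None if there is none."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


# Placeholder page: the "roofing repairs" copy points at the main page.
PAGE = """
<html><head>
<title>Roofing repairs</title>
<link rel="canonical" href="https://example.com/roof-repair">
</head><body>...</body></html>
"""

print(find_canonical(PAGE))
```

If this returns None for a page you thought was tagged, the tag is missing or malformed.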

It is elegant and it works.

Another option is the trusty 301 redirect. If you have two pages that are literally identical and you only need one of them just delete the bad one and redirect the URL to the good one. Anyone who clicks an old link to the deleted page gets automatically forwarded to the right place. Search engines pass the link equity along too. It is a very clean solution.
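In practice you would usually set redirects up in your CMS or web server settings, but the mechanics are simple enough to sketch. This is a tiny WSGI app, assuming a hypothetical `REDIRECTS` map of retired URLs. It answers old paths with a 301 status and a Location header pointing at the surviving page.

```python
# Hypothetical mapping of retired URLs to the pages that replace them.
REDIRECTS = {"/roofing-repairs": "/roof-repair"}


def app(environ, start_response):
    """Send a permanent redirect for retired paths, otherwise serve the page."""
    path = environ.get("PATH_INFO", "/")
    target = REDIRECTS.get(path)
    if target is not None:
        # 301 tells browsers and crawlers the move is permanent.
        start_response("301 Moved Permanently", [("Location", target)])
        return [b""]
    start_response("200 OK", [("Content-Type", "text/plain; charset=utf-8")])
    return [b"Page content goes here"]
```

To try it locally you could serve `app` with `wsgiref.simple_server.make_server` from the standard library and request the old path in a browser.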

Sometimes you just need to use a noindex tag. This tells search engines to keep a page out of their index. They can still crawl it but it will not appear in search results. It is perfect for things like internal search result pages or those printer-friendly versions I mentioned earlier. You want humans to use them but you definitely do not want them showing up in Google.
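The tag itself is `<meta name="robots" content="noindex">` in the page head. The same instruction can also be sent as an `X-Robots-Tag: noindex` HTTP header, which is handy when you want to cover a whole group of URLs at once. Here is a minimal sketch of that idea. The path prefixes and the function name are assumptions for illustration.

```python
# Hypothetical sections of the site that should stay out of search results.
NOINDEX_PREFIXES = ("/search", "/print/")


def robots_headers(path):
    """Return the extra response headers a given path should be served with."""
    if path.startswith(NOINDEX_PREFIXES):
        return [("X-Robots-Tag", "noindex")]
    return []


print(robots_headers("/print/old-article-1"))
print(robots_headers("/boiler-repair"))
```

Whichever form you use, the page has to remain crawlable, otherwise the search engine never gets to see the instruction.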

The hardest fix is when you just have thin copied text across your service pages. There is no technical trick for that. You just have to sit down and write better unique content.

What happens if you just ignore it

A lot of business owners ask me if they can just leave things as they are. They figure if there is no penalty then why bother spending money to fix it. I understand the logic.

But doing nothing is a choice that costs you money. Every day your pages are competing against each other is a day your competitors are pulling ahead. They are consolidating their ranking power while yours is diluted across a dozen weak pages. Over time that gap just gets wider.

It is like ignoring a slow puncture in your tyre. You can keep driving for a while but eventually you are going to be stuck on the side of the road.

I had a roofing client who ignored their duplicate location pages for three years. They thought it was fine because they still got a few calls a month. When we finally consolidated those pages into one strong location page their traffic tripled in six weeks. They had been artificially suppressing their own rankings for years just to save a few hours of work. It is never worth it.

The bottom line

I know this stuff can feel overwhelming when you are just trying to run a business. You want to fix roofs or help clients with legal troubles. You do not want to spend your evenings worrying about canonical tags and crawl budgets.

But the reality is your website is your digital storefront. If you have five identical signs pointing in different directions people are going to get confused. Search engines are exactly the same. They want a clear path to the best information.

Duplicate content is rarely a malicious thing. It is almost always just a byproduct of a growing business adding new pages over time without a clear plan.

The good news is it is entirely fixable. You do not need to burn the whole site down and start again. You just need to do a bit of pruning. Consolidate the weak pages. Redirect the exact copies and write some fresh text for the pages that matter most.

I have seen sites double their organic traffic just by cleaning up their duplicate issues. When you stop making Google guess what you want it to do it tends to reward you. It really is that simple.

Take an hour this week to look at your own site. Try that site search trick I mentioned. You might be surprised by how much clutter is hiding in plain sight. And if you find a mess, well, at least you know why your phone has not been ringing as much as it should be.